For digital marketers, publishers, and enterprise brands navigating the fiercely competitive landscape of 2026, the single most critical objective is understanding precisely how to show up in AI Overviews. The digital marketing ecosystem has experienced a seismic paradigm shift, transitioning rapidly from an era defined by ten blue hyperlinks to an environment dominated by generative artificial intelligence. Enterprises and digital strategists are being forced to rethink their operational frameworks to adapt to these new, highly complex algorithms.

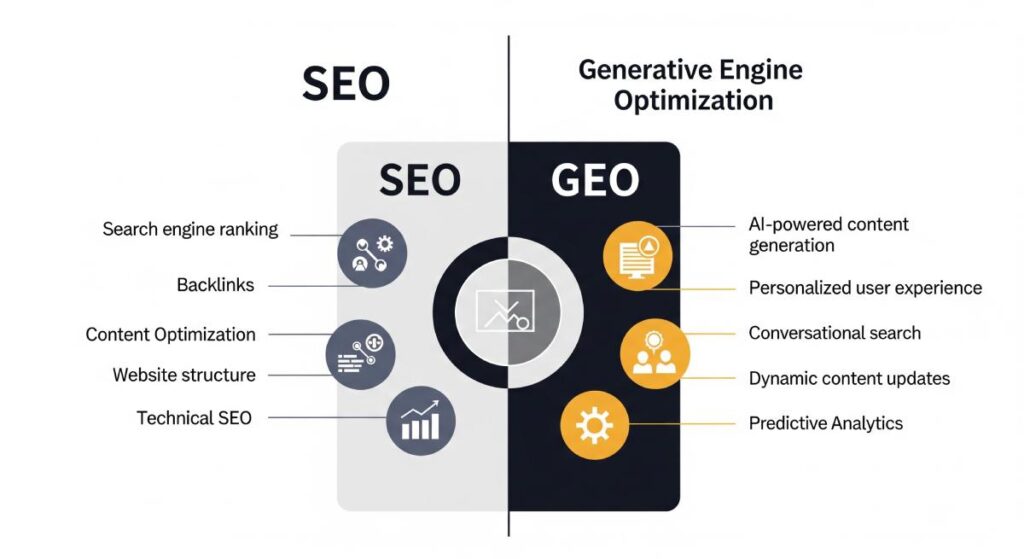

In this new era, traditional optimization methodologies have officially been superseded by Generative Engine Optimization (GEO). Rather than merely matching search queries to indexed pages based on keyword density and legacy backlink profiles, modern search engines have transformed into sophisticated answer engines that synthesize instantaneous, comprehensive responses directly on the results page.

This profound technological evolution dictates that securing the top organic ranking position on a search engine results page no longer guarantees user visibility, brand engagement, or inbound traffic. AI-generated summaries frequently dominate the most valuable, above-the-fold real estate on the screen, successfully satisfying user intent before a single organic click occurs. As a direct consequence, the overarching digital strategy for any forward-thinking brand must pivot away from merely hunting for algorithmic keyword matches and instead focus on becoming the definitive, unassailable source of truth that artificial intelligence models are mathematically compelled to cite.

Brands that successfully secure citations and direct mentions within these generative responses capture a disproportionate share of the remaining clicks, transforming visibility from a metric of sheer volume into a highly refined metric of hyper-qualified trust.

However, this transition introduces substantial friction, operational challenges, and genuine existential threats for content creators and online businesses. For agencies and in-house teams, understanding exactly what the negative impacts of AI Overviews on SEO are becomes the foundational step in mitigating the severe risks associated with traffic loss and revenue decline. Analytical data across the digital spectrum indicates that the presence of AI-generated summaries is linked to a 25% drop in publisher referral traffic for informational queries. Major digital publishing platforms, such as Mail Online, have reported click-through rate reductions of up to 56.1% on desktop devices and 48.2% on mobile devices when an AI summary is present, even in scenarios where the publisher’s website maintains the number one organic ranking in the traditional results beneath the summary.

The friction between search algorithms and content creators has escalated into unprecedented legal and structural battles. Educational technology platforms, most notably Chegg, experienced severe subscriber declines—dropping by 14% year-over-year—after their proprietary, meticulously crafted content was utilized by search algorithms to generate direct answers without compensation or adequate click-through attribution. This loss of essential traffic prompted Chegg to file a lawsuit against Google, accusing the search giant of unfair competition and the unauthorized utilization of resources. Simultaneously, Google executives have publicly acknowledged the immense difficulty of addressing publisher concerns.

During a February 2026 publishing conference, Google’s managing director for news and books partnerships in Europe stated that allowing publishers to opt out of AI Overviews without losing their traditional search visibility constitutes a “huge engineering project,” highlighting the deeply entangled nature of modern search architecture. Despite this friction, major publishers like the Financial Times have opted for lucrative content licensing deals, pairing their proprietary data with AI models to ensure financial survival.

To successfully secure visibility amidst this chaos, one must comprehend the mathematical and linguistic frameworks underpinning modern answer engines. The historical reliance on exact-match keywords has entirely given way to the complex intricacies of semantic search, a technology that evaluates the profound contextual meaning of queries rather than processing them as isolated strings of text. Semantic search systems fundamentally alter content retrieval by converting both the user’s query and the publisher’s content into multi-dimensional numerical vectors, mapping their relationships in a vast conceptual space to deliver highly relevant answers regardless of the specific vocabulary utilized. This means that the algorithm is no longer searching for the word a user typed; it is searching for the multidimensional concept the user intended to explore.

This vector-based approach fundamentally alters the baseline requirements for content creation. Algorithms no longer scan entire domains to assign a broad, generalized authority score; instead, they process content in highly granular, easily extractable blocks. The systems typically analyze segments of around 800 tokens to isolate direct, factual answers. When a paragraph is determined to be semantically complete—meaning it stands entirely on its own logically without requiring surrounding context—it becomes exponentially more likely to be selected for a generative summary. The architecture of the page must therefore be modular, allowing the machine to pull exact components without fracturing the grammatical or logical integrity of the sentence.
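As a rough illustration of passage-level processing, the sketch below groups whole paragraphs into chunks under a token budget. It assumes whitespace-separated words as a stand-in for model tokens (real tokenizers count differently) and reuses the 800-token figure quoted above. `chunk_paragraphs` is a hypothetical helper for auditing your own pages, not a description of how any search engine actually segments content.

```python
def chunk_paragraphs(text: str, max_tokens: int = 800) -> list[str]:
    """Group whole paragraphs into chunks of at most max_tokens words.

    Assumption: words split on whitespace approximate tokens.
    A single paragraph longer than max_tokens becomes its own chunk.
    """
    chunks: list[str] = []
    current: list[str] = []
    current_len = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n = len(para.split())
        # Close the current chunk if this paragraph would exceed the budget.
        if current and current_len + n > max_tokens:
            chunks.append("\n\n".join(current))
            current, current_len = [], 0
        current.append(para)
        current_len += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting only on paragraph boundaries keeps each chunk grammatically intact, which mirrors the modular page architecture described above.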

Furthermore, the fundamental backbone driving these generative summaries relies heavily on sophisticated natural language processing paradigms, which empower machines to comprehend, interpret, and generate human language with unprecedented accuracy. Modern large language models (LLMs) utilize self-supervised learning across billions or even trillions of parameters to deeply understand the nuanced, interconnected relationships between different entities, establishing a robust factual consensus that dictates which sources are mathematically elevated and which are discarded as statistical noise.

By leveraging advanced natural language processing, Google’s algorithms dynamically cross-reference publisher claims against recognized academic repositories, governmental databases, and established entity knowledge graphs. This real-time factual verification ensures that only the most reliable, precise, and structurally sound information is served to the end user. Consequently, the optimization objective for brands shifts entirely toward ensuring that every published page serves as a pristine, scannable, and unassailable node of information within the broader semantic web. If a brand fails to format its data for machine extraction, it will be rendered entirely invisible in the generative era.

Dominate the Generative Search Landscape with Rankzol

The transition from traditional web search to generative answer engines represents the greatest redistribution of digital wealth in the history of the internet. Brands that fail to adapt to vector embeddings, multimodal schema markup, and entity knowledge graph optimization will watch their organic traffic plummet as AI summaries capture their audience. You cannot afford to rely on outdated, pre-2024 tactics. It is time to future-proof your digital revenue. Rankzol provides the elite, enterprise-grade Generative Engine Optimization strategies required to force AI algorithms to cite your brand. Do not let your competitors become the default answer. Partner with Rankzol today, leverage our proprietary optimization frameworks, and secure your dominance in the zero-click search economy.

Transform your website into an unassailable entity by booking your strategic consultation with Rankzol now.

The Paradigm Shift: Classic SEO vs. Generative Engine Optimization

The profound transition from classic search engine indexing protocols to sophisticated AI-driven summaries requires a fundamental recalibration of success metrics, content production workflows, and overarching operational strategies. Historically, digital visibility relied on a highly predictable, linear relationship: digital marketers would optimize a specific page for exact-match keywords, acquire a high volume of external backlinks to artificially boost Domain Authority, and secure a top-three ranking to guarantee a predictable influx of organic clicks.

In 2026, this linear model is functionally obsolete. The correlation between traditional metrics, such as Domain Authority, and AI summary citations has plummeted to a minimal r=0.18, indicating that legacy link-building metrics hold drastically reduced influence over generative engines. Remarkably, deep industry analysis reveals that 47% of all AI citations are currently drawn from pages that rank below position #5 in classic organic results. This startling data demonstrates that AI models are not simply scraping the top-ranked pages to form their answers; they are employing a sophisticated “fan-out” technique to retrieve the most semantically complete and factually accurate passages from across the entire web, regardless of their classic hierarchical position.

The table below outlines the primary operational and philosophical differences between legacy optimization strategies and the rigorous modern requirements of Generative Engine Optimization:

| Strategic Element | Traditional SEO Frameworks (Pre-2024) | Generative Engine Optimization Strategies (2026) |
| --- | --- | --- |
| Primary Digital Goal | Maximize broad search volume and drive high quantities of organic clicks to landing pages. | Secure brand mentions, entity citations, and direct algorithmic trust within AI-generated summaries. |
| Core Authority Signal | Domain-level metrics, total backlink volume, and anchor text distribution. | Page-level E-E-A-T, explicit author credentials, first-hand experience, and verifiable Tier-1 citations. |
| Content Architecture | Long-form narratives and sprawling articles designed to maximize user dwell time and ad impressions. | Modular, highly extractable semantic chunks specifically targeting 127–156 words per passage. |
| Algorithmic Evaluation | Keyword density, lexical matching, and exact-phrase inclusion within H1s and meta tags. | Vector embedding alignment, conceptual density, and achieving a cosine similarity > 0.88. |
| Rich Media Strategy | Images utilized primarily for aesthetic engagement and breaking up walls of text. | Comprehensive multi-modal integration seamlessly combining text, precise schema, and 60-90s vertical video. |
| Key Performance Metric | Traditional ranking positions, click-through rate (CTR), and overall organic traffic volume. | Information gain, AI Overview presence rate, brand search volume, and generative citation frequency. |

This structural pivot strongly underscores the absolute necessity of optimizing for “Information Gain.” Artificial intelligence systems are specifically engineered to filter out redundant, commoditized content that merely echoes existing search results. To achieve visibility, content must introduce entirely original research, distinct analytical frameworks, highly verifiable data points, or unique first-hand experiences that the algorithm cannot readily source from a competitor’s domain. If an article reads like a generic summary of the top three search results, the AI will ignore it entirely, viewing it as mathematically redundant.

The Anatomy of the February 2026 Discover Core Update

To fully comprehend how to optimize for AI interfaces, one must examine the specific algorithm updates Google deploys to its ranking systems. The criteria for demonstrating algorithmic authority were significantly sharpened during Google’s massive February 2026 Discover Core Update. This algorithmic adjustment focused acutely on the underlying quality, originality, and local relevance of content surfaced to users, serving as a direct mechanism to combat the explosive proliferation of low-effort, mass-produced text.

The February 2026 update explicitly and aggressively targeted “thin AI content”. Search algorithms are not fundamentally penalizing the use of artificial intelligence in content creation; rather, they are systematically devaluing content that completely lacks differentiation, original insight, and substantive topical depth. Domains that relied heavily on programmatic, lightly edited summaries designed solely to capture long-tail keywords experienced devastating visibility declines, losing vast swaths of their organic footprint overnight.

Conversely, the core update aggressively rewarded websites exhibiting profound, demonstrable “Topical Authority”. Modern ranking systems are now hyper-tuned to identify and evaluate expertise on a strict topic-by-topic basis. For instance, Google specifically noted that a dedicated local news domain with an extensive, historically deep gardening section possesses the contextual authority to rank for complex horticultural queries, whereas a massive, generic movie review site that happens to publish a singular, isolated article on the exact same gardening topic will be entirely ignored by the algorithm due to a lack of topical density. This dynamic requires organizations to build comprehensive topic clusters, meticulously interconnecting their pages with strategic internal links to signal vast subject matter expertise to the crawling mechanisms.

Furthermore, the core update prioritized deep geographic alignment, intentionally surfacing more locally relevant content based on the user’s specific country and region. It also introduced uncompromising penalties for sensationalism and clickbait, explicitly recommending that digital publishers avoid manipulative psychological tactics, exaggerated preview details, and the intentional withholding of crucial information in titles, meta descriptions, and featured images. Webmasters must ensure that all headlines accurately and transparently capture the exact essence of the underlying content, as the algorithm now heavily favors neutral, factual, and highly reliable framing over emotional manipulation.

Because core updates prompt significant re-weighting of complex algorithmic signals, traffic volatility is an expected byproduct. Industry best practices heavily suggest observing data patterns for a minimum of 14 days post-rollout before executing aggressive structural site changes, mass page deletions, or reactionary content overhauls.

Deconstructing the 7 Core Ranking Factors for AI Overviews in 2026

To systematically conquer generative search interfaces, digital publishers must flawlessly align their content architecture with the specific mathematical drivers that govern machine learning models. Extensive industry analysis of thousands of distinct AI citations reveals seven core ranking factors that strictly dictate inclusion in generative summaries. Mastering these seven pillars is the absolute foundation of modern SEO.

1. Semantic Completeness (r=0.87 correlation)

Identified as the single most critical ranking factor in the modern algorithm, semantic completeness dictates whether a specific passage provides a self-contained, holistic answer to a user’s query without necessitating external context or preceding paragraphs to make sense. Content that achieves high scores for semantic completeness is determined to be 4.2 times more likely to be cited by the AI.

To perfectly satisfy this rigorous requirement, content creators must meticulously employ the “Island Test.” This foundational principle states that a paragraph must make total logical, grammatical, and factual sense if extracted entirely from the surrounding article and viewed in complete isolation. Therefore, webmasters must strictly avoid utilizing transitional phrases such as “as mentioned earlier,” “see the chart above,” “in the next section,” or “therefore” as these relative references severely confuse extraction algorithms. A semantically complete passage should always feature an inverted pyramid structure: delivering a direct, 20-to-30-word answer immediately at the onset of the paragraph, followed by necessary definitions, empirical data points, and a highly concise conclusion, all strictly contained within an optimal span of 127 to 156 words. Passages exceeding 167 words begin to lose vector focus and are frequently truncated or ignored.
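The Island Test lends itself to a simple pre-publication check. The following is a minimal sketch, assuming the 127-to-156-word window and the four relative phrases listed above. `island_test` and `RELATIVE_PHRASES` are hypothetical names, and a phrase match is only a heuristic, not a measure of true semantic completeness.

```python
import re

# Relative references named in the article; extend this list as needed.
RELATIVE_PHRASES = (
    "as mentioned earlier",
    "see the chart above",
    "in the next section",
    "therefore",
)

def island_test(passage: str, min_words: int = 127, max_words: int = 156) -> dict:
    """Report word count and any relative references found in a passage."""
    word_count = len(passage.split())
    lowered = passage.lower()
    found = [
        phrase for phrase in RELATIVE_PHRASES
        if re.search(r"\b" + re.escape(phrase) + r"\b", lowered)
    ]
    length_ok = min_words <= word_count <= max_words
    return {
        "word_count": word_count,
        "length_ok": length_ok,
        "relative_references": found,
        "passes": length_ok and not found,
    }
```

Running this over every paragraph of a draft flags passages that are too long, too short, or dependent on surrounding context before an editor reviews them by hand.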

2. Multi-Modal Content Integration (r=0.92 correlation)

The seamless integration of diverse, rich media formats into a unified digital experience represents the most significant new ranking factor in 2026. Artificial intelligence algorithms heavily favor content that substantiates textual claims with compelling visual and auditory evidence. Pages that seamlessly combine highly structured text, high-resolution imagery, targeted video content, and their corresponding structured data schemas experience a staggering 156% higher selection rate, with full integration delivering an astonishing 317% increase in citation selection rates compared to text-only pages.

Short-form video, specifically, has become an exceptionally potent tool for generative visibility. Search engines exhibit a highly documented preference for concise, 60-to-90-second vertical videos that efficiently explain complex, technical concepts. These precise visual assets perfectly match diminished user attention spans and drastically improve page retention metrics, which feeds positive user experience signals back to the core algorithm. Crucially, publishers must utilize deep VideoObject schema markup, meticulously integrating Clip or SeekToAction properties, which allow the AI bot to pinpoint, extract, and surface specific timestamps directly within the search interface.
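To make the VideoObject and Clip markup concrete, here is a small sketch that builds the JSON-LD as a Python dictionary. The property names (`hasPart`, `startOffset`, `endOffset`) come from Schema.org. The function name, the example URLs, and the `?t=` deep-link parameter are assumptions for illustration, so adjust them to however your own player handles timestamps.

```python
import json

def video_jsonld(name, description, thumbnail_url, upload_date, page_url, clips):
    """Build VideoObject JSON-LD with Clip parts.

    clips: list of (clip_name, start_seconds, end_seconds) tuples.
    Assumption: the watch page accepts a ?t=<seconds> query parameter.
    """
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,
        "hasPart": [
            {
                "@type": "Clip",
                "name": clip_name,
                "startOffset": start,
                "endOffset": end,
                "url": f"{page_url}?t={start}",
            }
            for clip_name, start, end in clips
        ],
    }

if __name__ == "__main__":
    markup = video_jsonld(
        "How cosine similarity works",
        "A 75-second explainer.",
        "https://example.com/thumb.jpg",
        "2026-02-01",
        "https://example.com/videos/cosine",
        [("Definition", 0, 30), ("Worked example", 30, 75)],
    )
    # Embed the output in a <script type="application/ld+json"> tag.
    print(json.dumps(markup, indent=2))
```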

3. Real-Time Factual Verification (r=0.89 correlation)

Generative models do not operate in a vacuum, nor do they rely solely on the data present within their initial training sets; they dynamically cross-reference textual claims against trusted academic databases, governmental repositories, and established entity knowledge graphs in real-time. Content that actively incorporates recent, verified statistics, references peer-reviewed scientific journals, and utilizes Tier-1 citations (such as highly trusted .gov or .edu domains) enjoys an 89% higher probability of algorithmic selection.

To rigorously optimize for factual verification, linguistic vagueness must be entirely eradicated from the content production workflow. General, sweeping statements such as “recent studies show,” “experts agree,” or “data suggests” are frequently filtered out due to a complete lack of substantiation. Instead, content must employ hyper-specific, granular attribution, clearly naming the exact study, the publishing institution, the lead researcher, and the specific year of publication to perfectly align with the algorithm’s sophisticated consensus-detection protocols.

4. Vector Embedding Alignment (r=0.84 correlation)

The underlying architecture of modern search infrastructure relies entirely on vector embeddings, rapidly converting human language into precise numerical coordinates within a vast, multi-dimensional conceptual space to deeply interpret user intent. This complex mathematical translation allows the engine to accurately recognize the topical proximity between a user’s vaguely worded question and a publisher’s highly specific answer.

The primary mathematical metric for this alignment is Cosine Similarity. Content that successfully achieves a cosine similarity score above 0.88 relative to the core query intent demonstrates an incredible 7.3 times higher selection rate than content scoring below the 0.75 threshold. Achieving high cosine similarity requires defining technical terminology immediately via inline definitions (using parentheses or clear phrases like “also known as”) to ensure the machine comprehensively grasps the contextual framework of the article without having to guess at acronyms or industry jargon.
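Cosine similarity itself is straightforward to compute: it is the dot product of two vectors divided by the product of their lengths. The sketch below uses tiny hand-written vectors purely for illustration. Real embeddings come from a model and have hundreds or thousands of dimensions, and the 0.88 and 0.75 thresholds are the figures quoted in this article, not values exposed by any search engine.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between vectors a and b."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # Convention chosen here for zero vectors.
    return dot / (norm_a * norm_b)

if __name__ == "__main__":
    query_vec = [3.0, 4.0]    # made-up "query" embedding
    passage_vec = [4.0, 3.0]  # made-up "passage" embedding
    score = cosine_similarity(query_vec, passage_vec)
    print(f"similarity = {score:.2f}, above 0.88: {score > 0.88}")
```

Because the measure depends only on direction and not on magnitude, a short passage and a long one can score equally well against the same query.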

5. E-E-A-T Authority Signals (r=0.81 correlation)

Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) remain absolutely foundational to algorithmic success, functioning as the overarching quality framework through which all other signals, links, and text are interpreted. Data conclusively indicates that 96% of all AI citations are derived directly from verified, authoritative sources that clearly and unambiguously project strong E-E-A-T signals.

Anonymous content, or content published under generic admin accounts, is actively suppressed in 2026. Author credentials have transitioned from a beneficial enhancement to an absolute prerequisite for visibility. Publishers must provide detailed, highly verifiable author biographies, explicit connections to recognized academic or industry institutions, and comprehensive Person schema markup to facilitate seamless entity resolution. Demonstrating true first-hand experience through unique case studies, original photography, and proprietary data prevents content from being classified as a generic, secondary summary.

6. Entity Knowledge Graph Density (r=0.76 correlation)

To truly understand what a web page is comprehensively about, AI models continuously analyze the density and relationship of recognized entities—specific people, places, organizations, historical events, or concepts that exist firmly within the overarching Google Knowledge Graph. Content containing 15 or more clearly connected entities per 1,000 words demonstrates a 4.8 times higher probability of being selected by generative models. Establishing these entity relationships often involves intentionally linking out to highly authoritative external sources to map clear, undeniable semantic pathways for the algorithm to follow.
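A crude way to estimate entity density in a draft is to count how many distinct entities from a known list appear per 1,000 words. This sketch assumes you supply the entity list yourself and relies on case-insensitive substring matching. Production systems use entity linking against a knowledge graph, which this hypothetical `entity_density` helper does not attempt.

```python
def entity_density(text: str, known_entities: list[str]) -> float:
    """Distinct known entities found in text, scaled per 1,000 words."""
    word_count = len(text.split())
    if word_count == 0:
        return 0.0
    lowered = text.lower()
    # Substring matching is an assumption; it can over-match short names.
    found = {e for e in known_entities if e.lower() in lowered}
    return 1000 * len(found) / word_count
```

A result of 15.0 or more would correspond to the threshold cited above, although the score is only as good as the entity list passed in.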

7. Structured Data Implementation (73% selection boost)

Structured data serves as the explicit, required translation layer between human-readable content and machine-readable code. By utilizing precise Schema.org markup—such as FAQPage, HowTo, Article, ImageObject, and Product—publishers completely remove ambiguity from their content architecture. Properly structured pages receive a definitive 73% boost in AI selection rates compared to unmarked domains, as structured data drastically reduces the intense computational resources required for the AI to parse, understand, and extract the necessary information.
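As one example of the markup types listed above, the sketch below generates FAQPage JSON-LD from question and answer pairs. The `Question`, `acceptedAnswer`, and `Answer` types are standard Schema.org vocabulary. The function name and sample text are illustrative assumptions.

```python
import json

def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> dict:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }

if __name__ == "__main__":
    markup = faq_jsonld([
        ("What is Generative Engine Optimization?",
         "The practice of structuring content so AI answer engines can cite it."),
    ])
    # Embed the output in a <script type="application/ld+json"> tag.
    print(json.dumps(markup, indent=2))
```

The answer text in the markup should match the visible on-page answer word for word, since the markup describes the page rather than replacing it.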

Architectural Formatting: Engineering Content for LLM Extraction

Even if a piece of content possesses deep, unrivaled expertise and flawless factual accuracy, it will completely fail to surface in AI summaries if the architectural structure of the HTML impedes algorithmic extraction. Formatting is absolutely not an aesthetic choice in 2026; it is a foundational pillar of technical SEO and machine learning optimization.

To engineer a page specifically for machine parsing, the content must be broken down into modular, highly scannable, bite-sized components. The traditional, academic practice of writing extensive “walls of text” is highly detrimental to AI visibility, as it forces the model to expend excess tokens deciphering the core point. Instead, paragraphs must be kept incredibly concise, with complex processes systematically broken down into distinct, numbered steps that an AI can easily list out to a user.

The most effective architectural framework is the direct answer format. Sections should immediately lead with a clear definition, actionable takeaway, or bolded summary block before elaborating with any additional context or historical background. By presenting the core factual payload at the very top of the section, publishers align perfectly with the AI’s top-down extraction priorities.

Subheadings (H2s and H3s) play a crucial, structural role in this extraction process. Rather than using creative, abstract, or purely keyword-stuffed subheadings, webmasters must craft H2s and H3s that mirror actual user questions verbatim. By matching the query structure directly in the HTML hierarchy, the algorithm can easily identify the exact segment of the page that definitively resolves the user’s intent.

Furthermore, integrating robust comparison tables, bulleted lists outlining clear pros and cons, and comprehensive FAQ sections at the bottom of articles directly caters to how large language models summarize comparative data for users. A heavy, promotional marketing tone must be entirely stripped from informational pages, as algorithms are strictly programmed to avoid citing promotional, biased language in favor of neutral, encyclopedic phrasing.

Re-engineering E-E-A-T: The Currency of Generative Trust and Wikipedia

In the age of generative models, trustworthiness is the ultimate currency. Search engines do not assign a single, explicit E-E-A-T score; rather, E-E-A-T functions as an overarching quality framework that evaluates content accuracy, author credibility, external links, and broader digital reputation.

Earning trust requires transparent actions. Publishers must cite primary sources, link out to high-authority domains, use original visuals to explain complex data, and proactively correct mistakes. Furthermore, the quality of backlinks has entirely superseded the quantity of backlinks. Earning a few highly relevant links from domains within adjacent niches serves as a genuine recommendation, whereas automated or manipulative link schemes actively undermine trust signals and invite severe algorithmic penalties.

A highly misunderstood but critical component of establishing deep entity trust is the strategic relationship with Wikipedia. While direct links from Wikipedia are tagged as “nofollow” and do not pass traditional link equity for classic SEO, Wikipedia is the absolute foundational bedrock of Google’s Knowledge Graph. Engaging in “black hat” tactics to drop promotional links on Wikipedia is useless and heavily penalized. However, utilizing Wikipedia for legitimate “white hat” entity building is incredibly powerful.

If a brand or individual is genuinely notable, creating a factual, neutral Wikipedia page (or a Wikidata entry, which has slightly less strict eligibility conditions) helps search algorithms firmly establish the brand as a recognized entity in the semantic web. Furthermore, observing Wikipedia’s internal linking structure—how it uses categories, subcategories, and vast hyperlinks to connect concepts—provides a masterclass in how to build topic clusters on your own domain. Creating a clear information hierarchy that mimics Wikipedia improves user experience and drastically helps search engines understand the exact context and depth of your content.

The Analytics Void: Tracking Generative Visibility Without Native Tools

One of the most profound, frustrating challenges introduced by the generative search era is the severe degradation of transparent analytics. Proving the return on investment for optimization campaigns has become incredibly complex due to significant, intentional blind spots within native reporting tools.

As of 2026, Google Search Console (GSC) officially includes “AI Mode” and AI Overviews in its overall search traffic data under the “Web” search type, meaning impressions and clicks are counted, but they are entirely mixed with traditional 10-blue-links data. Search Console provides no distinct dimension, toggle, or filter for AI Overview presence.

More critically, when a brand’s content gets cited merely as a source within an AI Overview—driving massive brand visibility, psychological trust, and authority signals without resulting in a direct click—Search Console fails to track the citation entirely. This lack of granular data leaves businesses, agencies, and in-house SEO teams struggling to justify budgets and measure the true impact of their generative strategies.

Because native platforms obfuscate this vital data, organizations must implement sophisticated external tracking methodologies to monitor their generative visibility.

The Manual Data Processing Framework

For organizations lacking specialized enterprise software, manual SERP (Search Engine Results Page) monitoring remains a highly reliable, albeit incredibly labor-intensive, method for tracking visibility.

- Strict Keyword Categorization: Webmasters must compile a prioritized list of core target keywords, meticulously categorizing them by search intent (e.g., informational vs. navigational). Informational queries typically trigger AI Overviews at a significantly higher rate than transactional ones.
- Manual SERP Verification: SEO teams must manually query these keywords to document exactly whether an AI Overview appears and actively log whether their specific domain is cited within the summary source carousels.
- Complex Data Processing: By exporting GSC data from the previous week and matching it against the manual tracking sheet using VLOOKUP functions, data analysts can begin to calculate critical custom metrics.
- Custom Metric Calculation: Teams can calculate the “AI Overview Presence Rate” (the total percentage of tracked keywords that trigger a generative summary) and the highly coveted “Citation Rate” (the specific percentage of those summaries that actively include the brand’s link).
- CTR Comparison Analysis: By utilizing pivot tables, analysts can deeply compare the average click-through rates of queries that feature AI summaries versus those that do not, clearly and definitively isolating the massive traffic impact of the generative features.
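The spreadsheet workflow above can also be scripted once the manual tracking sheet and the GSC export have been joined by keyword. The following sketch assumes each row is a dictionary with the hypothetical keys `has_overview`, `cited`, `clicks`, and `impressions`. It computes CTR as total clicks divided by total impressions for each group, which is one reasonable reading of "average click-through rate" but not the only one.

```python
def overview_metrics(rows: list[dict]) -> dict:
    """Compute presence rate, citation rate, and CTR with/without AI Overviews.

    Each row (assumed shape): {"keyword": str, "has_overview": bool,
    "cited": bool, "clicks": int, "impressions": int}
    """
    with_overview = [r for r in rows if r["has_overview"]]
    without_overview = [r for r in rows if not r["has_overview"]]
    cited = [r for r in with_overview if r["cited"]]

    def ctr(group: list[dict]) -> float:
        impressions = sum(r["impressions"] for r in group)
        clicks = sum(r["clicks"] for r in group)
        return clicks / impressions if impressions else 0.0

    return {
        # Share of tracked keywords that trigger an AI Overview.
        "presence_rate": len(with_overview) / len(rows) if rows else 0.0,
        # Share of those overviews that cite the brand's domain.
        "citation_rate": len(cited) / len(with_overview) if with_overview else 0.0,
        "ctr_with_overview": ctr(with_overview),
        "ctr_without_overview": ctr(without_overview),
    }
```

Comparing `ctr_with_overview` against `ctr_without_overview` week over week gives the same view as the pivot table, without the manual lookup step.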

Advanced Third-Party Visibility Platforms

Relying strictly on manual spreadsheets is absolutely not scalable for enterprise operations or large agencies. Consequently, the digital marketing industry has seen a rapid proliferation of advanced third-party visibility platforms designed specifically to monitor generative engines.

Tools embedded within major platforms like Semrush now offer dedicated SERP Features filters within their Position Tracking modules. This highly advanced feature allows users to automatically identify which monitored keywords consistently trigger AI Overviews and dynamically track whether their target pages are included in the source citations.

Furthermore, dedicated AI search visibility platforms, such as Wellows, have emerged in 2026 to provide deep historical performance monitoring, API integrations, and comprehensive, automated client reporting. These specialized platforms track a brand’s representation over vast periods of time, continuously monitoring exactly where citations appear and highlighting where critical brand mentions are missing. By offering WordPress integrations and deep API connections with Google Search Console, these platforms seamlessly integrate into an agency’s existing workflow, providing a holistic, unclouded view of an organization’s true digital footprint in the AI era.

The Economic Shift: Zero-Click Realities and Agent-to-Agent (A2A) Commerce

The rapid evolution toward generative summaries fundamentally and permanently alters the economic model of the internet. The lucrative era of securing “easy clicks” by publishing thin content to answer basic, generic questions is permanently over. Generative interfaces are designed specifically to retain the user on the search engine’s proprietary platform, thereby transforming zero-click searches from an occasional anomaly into the new, undeniable operational standard.

However, digital strategists must realize that fewer clicks do not inherently signify less commercial value; rather, they represent a profound redefinition of the value exchange. Traffic generated by AI tools, advanced LLMs, and generative agents often carries exceptionally high intent. Recent industry data sets suggest that referral traffic originating from tools like ChatGPT or Perplexity can convert at rates up to five times higher than traditional web search traffic. This incredible metric indicates that while the absolute volume of sheer visitors may decrease, the overall quality, trust level, and commercial readiness of the retained audience increases significantly.

To adapt to this zero-click normalization, digital strategies must prioritize brand mentions and entity establishment. Implementing a comprehensive “Search Everywhere” strategy is essential. This involves building a robust, authentic presence across varied digital communities, authoritative industry forums, platforms like Reddit, and video ecosystems like YouTube, as generative algorithms increasingly draw on these diverse ecosystems to construct their answers. When an AI system consistently associates a specific brand entity with a particular commercial solution across multiple trusted platforms, that brand is far more likely to become the default recommendation embedded within the generative response.

This concept extends directly into the rapidly emerging frontier of Agent-to-Agent (A2A) commerce. In the very near future of digital retail, purchasing decisions will not just be influenced by AI; they will be executed entirely by AI. Personal AI agents operating autonomously on behalf of consumers will interact directly with commercial AI agents operating on behalf of retailers. In an A2A ecosystem, visual website aesthetics, persuasive copywriting, and emotional branding become entirely irrelevant. The only factors that will successfully facilitate a transaction are flawless JSON structured data, unassailable semantic completeness, and absolute real-time factual accuracy regarding inventory levels, precise pricing, and detailed product specifications. E-commerce platforms that fail to structure their data specifically for machine-to-machine extraction will be entirely invisible to the automated purchasing algorithms of the future, essentially ceasing to exist in the digital economy. Platforms like Shopify are currently gaining a massive advantage by integrating these machine-readable structures directly into their core architecture.
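As a rough illustration of what machine-readable product data can look like, the sketch below builds a schema.org Product object with a nested Offer and serializes it as JSON-LD. Using schema.org vocabulary is an assumption here, since the article only specifies "JSON structured data". The function name build_product_jsonld and the product values are hypothetical.

```python
import json


def build_product_jsonld(name: str, sku: str, price: float,
                         currency: str, in_stock: bool) -> str:
    """Return a JSON-LD string describing one product offer (illustrative helper)."""
    availability = (
        "https://schema.org/InStock" if in_stock
        else "https://schema.org/OutOfStock"
    )
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",      # price kept as a fixed two-decimal string
            "priceCurrency": currency,    # ISO 4217 code, e.g. "USD"
            "availability": availability,
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product used only to show the output shape.
markup = build_product_jsonld("Example Trail Shoe", "EX-TRAIL-42", 89.5, "USD", True)
print(markup)
```

In practice, a string like this would typically be embedded in the page inside a script tag of type application/ld+json and regenerated whenever price or inventory changes, so that the data an agent reads matches the live catalog.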

Secure Your Future Search Dominance with Rankzol

The algorithms of 2026 operate on a strict foundation of trust, factual consensus, and mathematical vector alignment. Navigating the immense complexities of vector embeddings, multimodal schema markup, real-time factual verification, and advanced entity graph development requires dedicated expertise and cutting-edge analytical tools. You cannot leave your digital visibility to chance in an era where AI dictates the winners and losers of internet traffic.

Rankzol provides the comprehensive frameworks, optimization strategies, and precise data execution necessary to transform standard websites into authoritative entities that generative models are compelled to cite. Organizations looking to future-proof their digital revenue streams and master the complexities of AI-first algorithms must take immediate action. Elevate your brand, capture high-intent AI referral traffic, and dominate the generative SERP. Partner with Rankzol today and transform your SEO strategy.

To conclude, succeeding in the modern digital landscape requires an absolute departure from legacy metrics. Content must be modular, highly extractable, incredibly dense with original insights, and backed by verifiable, real-world credentials. By understanding the technological drivers of generative models, implementing rigorous structural formatting, and prioritizing profound topical depth over shallow keyword targeting, domains can secure their positions as undeniable authorities. Embracing this architectural evolution is the definitive, unassailable blueprint for any entity intent on mastering how to show up in AI Overviews SEO.

Frequently Asked Questions

1. What are the most important ranking factors for AI Overviews in 2026?

The core ranking factors for generative engine optimization focus heavily on semantic completeness (providing a self-contained answer without needing surrounding context), multi-modal content integration (combining text, advanced schema, and video), real-time factual verification using authoritative Tier-1 citations, and strong E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) authority signals.

2. How does the “Island Test” help content rank in AI summaries?

The “Island Test” is a critical optimization technique ensuring that a specific paragraph makes complete logical, grammatical, and factual sense if extracted entirely from the surrounding article. To pass this test, the passage must strictly avoid relative references like “as mentioned above” and provide a direct answer, making it highly suitable for an AI algorithm to seamlessly extract and present in a summary without breaking the context.
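One part of the Island Test, the ban on relative references, lends itself to a simple automated check. The sketch below is a heuristic under stated assumptions: the phrase list is illustrative and far from exhaustive, the function name find_relative_references is hypothetical, and passing it does not prove that a paragraph is logically self-contained.

```python
import re

# Illustrative patterns for phrases that point outside the paragraph.
RELATIVE_REFERENCE_PATTERNS = [
    r"\bas (?:mentioned|noted|discussed|described|shown|stated) "
    r"(?:above|below|earlier|previously)\b",
    r"\bsee (?:above|below)\b",
    r"\bthe (?:aforementioned|above-mentioned)\b",
    r"\bin the (?:previous|next|following) section\b",
]


def find_relative_references(paragraph: str) -> list[str]:
    """Return every phrase in the paragraph that matches a known pattern."""
    hits = []
    for pattern in RELATIVE_REFERENCE_PATTERNS:
        for match in re.finditer(pattern, paragraph, flags=re.IGNORECASE):
            hits.append(match.group(0))
    return hits


flagged = find_relative_references(
    "As mentioned above, schema markup helps. See below for examples."
)
print(flagged)
```

A paragraph that returns an empty list has cleared only this one mechanical check; a human editor still needs to confirm that it answers the question directly and makes sense in isolation.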

3. Has Domain Authority lost its importance in the new algorithmic landscape?

Yes, the mathematical correlation between traditional Domain Authority and AI Overview citations has dropped significantly to a minimal level (r=0.18). Generative algorithms heavily prioritize page-level semantic relevance, entity density, and factual accuracy, evidenced by the staggering fact that 47% of all AI citations now come from pages ranking below position #5 in classic organic results.

4. How did the February 2026 Google Core Update impact generative search?

The massive February 2026 Discover Core Update specifically targeted and devalued thin, unoriginal AI-generated content that was mass-produced solely to cover keywords. Conversely, the update heavily rewarded domains demonstrating true topical authority, profound local relevance, and in-depth, original research, while actively penalizing and reducing the visibility of sensationalism and clickbait tactics.

5. Why is video content so important for AI Overview visibility?

AI algorithms heavily favor multi-modal content because it provides a richer user experience. Including short-form video (specifically 60 to 90 seconds in length) that quickly explains complex concepts matches shorter user attention spans and significantly increases page retention. Properly integrating this video with deep VideoObject schema provides up to a 317% selection rate boost for AI-generated results.

6. Can I track my AI Overview visibility in Google Search Console?

Currently, there is no direct, native dimension or specific filter in Google Search Console to track AI Overview presence independently. GSC groups AI Mode impressions and clicks together with traditional web search data. To track specific generative visibility, webmasters must use manual SERP checking methodologies or rely on advanced third-party tools like Semrush and Wellows.

7. What is Semantic Search and how does it relate to vector embeddings?

Semantic search aims to understand the searcher’s true intent and the contextual meaning of a query, rather than just matching literal keywords. It achieves this by converting words and entire sentences into multi-dimensional numerical vectors. The engine then measures the “cosine similarity” between the query’s vector and the content’s vector to help determine relevance and ranking.
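Cosine similarity is the dot product of two vectors divided by the product of their lengths. A minimal sketch follows, assuming tiny three-dimensional vectors invented for illustration; real embedding models produce vectors with hundreds or thousands of dimensions, and the passage names here are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # convention chosen here for zero vectors
    return dot / (norm_a * norm_b)


# Made-up vectors standing in for real embeddings.
query_vector = [0.9, 0.1, 0.0]
passages = {
    "pricing guide": [0.8, 0.2, 0.1],
    "company history": [0.1, 0.1, 0.9],
}

# Rank passages from most to least similar to the query.
ranked = sorted(
    passages,
    key=lambda name: cosine_similarity(query_vector, passages[name]),
    reverse=True,
)
print(ranked)
```

The score depends only on the direction of the vectors and not on their length, which is why two passages of very different sizes can still be judged similar in meaning.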

8. How should I format my content to maximize extraction by AI engines?

Content should strictly avoid long, academic “walls of text” and instead be broken down into scannable, modular blocks. Webmasters should utilize an inverted pyramid structure (providing a direct 20-30 word answer immediately), format subheadings (H2s/H3s) as direct user questions, and incorporate bulleted lists, numbered steps, and highly structured comparison tables.

9. Why is author identity and E-E-A-T critical for generative visibility?

AI systems are programmed to prioritize verifiable expertise to prevent the rapid spread of misinformation and AI hallucinations. Anonymous content is heavily suppressed. To be cited, content must feature detailed author biographies, demonstrable first-hand experience, explicit professional connections to industry organizations, and comprehensive Person schema markup to establish a clear digital entity.

10. What is Agent-to-Agent (A2A) commerce in the context of modern SEO?

Agent-to-Agent (A2A) commerce refers to the rapidly emerging future where personal AI agents make purchasing decisions by interacting directly with commercial retail AI agents. In this machine-to-machine ecosystem, traditional visual marketing becomes obsolete, and success depends entirely on flawless JSON structured data, rich product feeds, and real-time factual accuracy regarding inventory and specifications.